Underdetermined Blind Source Separation using Binary Time-Frequency Masking with Variable Frequency Resolution

![Speech intelligibility in background noise with ideal binary time-frequency masking. | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/3c6dfe9b91f3cd86d21c51b97e8a92f883d1578c/4-Figure1-1.png)

Speech intelligibility in background noise with ideal binary time-frequency masking. | Semantic Scholar

![Single channel speech enhancement using ideal binary mask technique based on computational auditory scene analysis | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/15a96ae516ec40820adc2789c00249a98fa4d98e/5-Figure2-1.png)

Single channel speech enhancement using ideal binary mask technique based on computational auditory scene analysis | Semantic Scholar

The benefit of combining a deep neural network architecture with ideal ratio mask estimation in computational speech segregation to improve speech intelligibility | PLOS ONE

An Auditory Scene Analysis Approach to Speech Segregation. DeLiang Wang, Perception and Neurodynamics Lab, The Ohio State University.

Time-Frequency Masking Based Online Multi-Channel Speech Enhancement With Convolutional Recurrent Neural Networks | Soumitro Chakrabarty

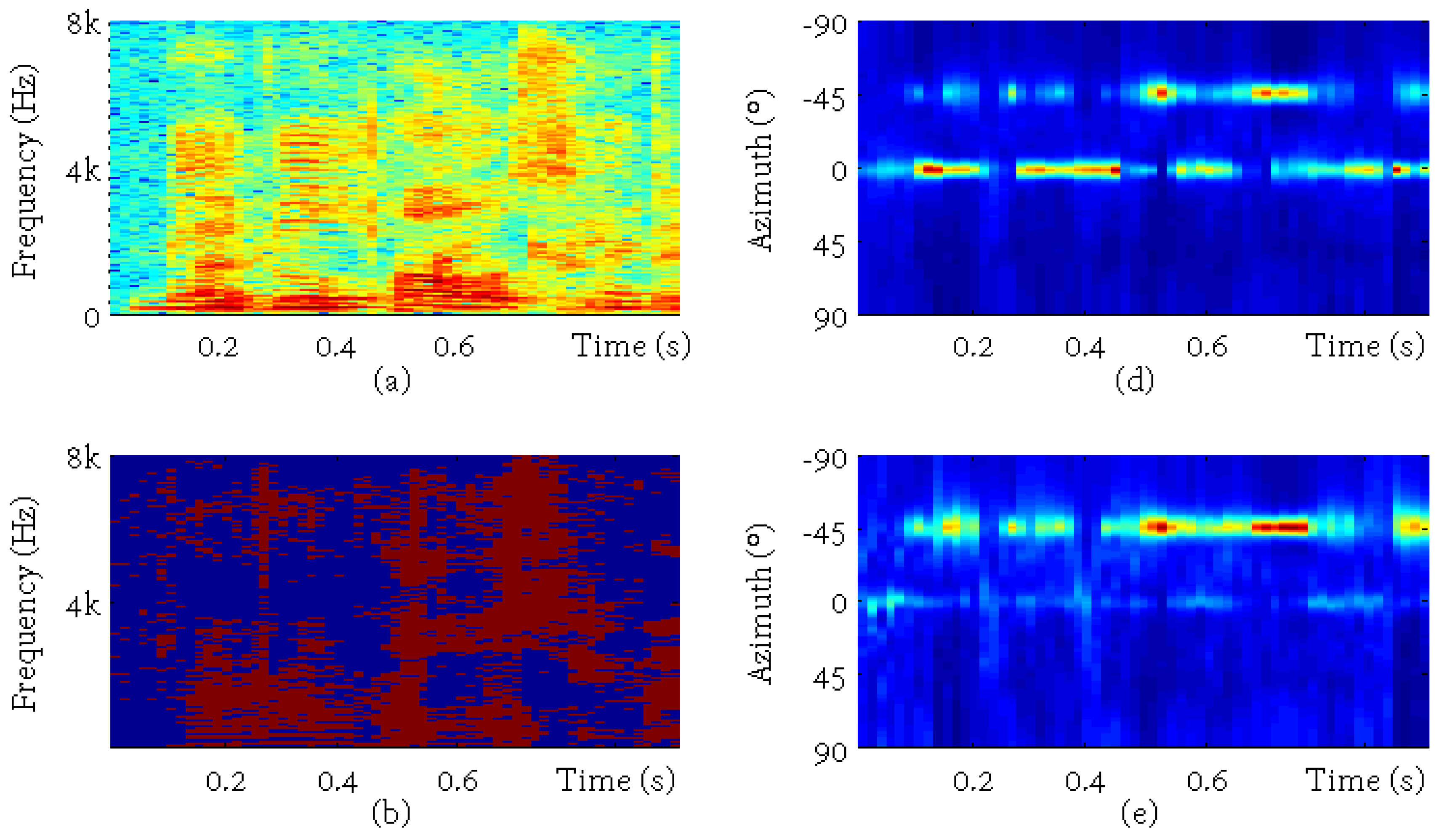

Target Speaker Localization Based on the Complex Watson Mixture Model and Time-Frequency Selection Neural Network | Applied Sciences
